- Sensors

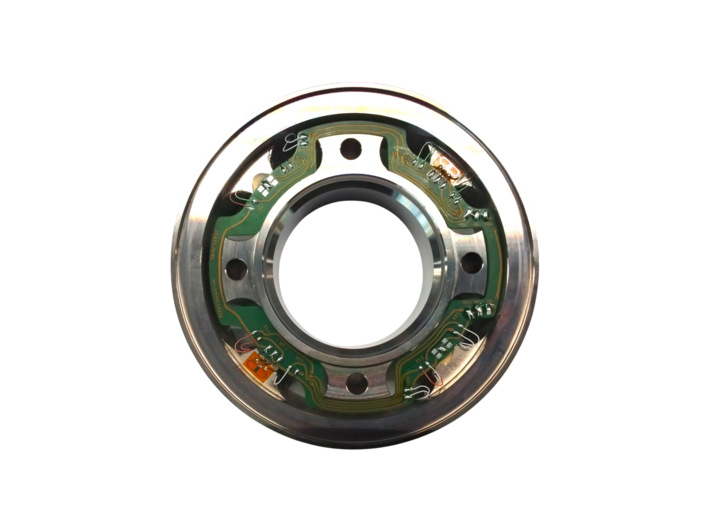

- Force Sensors

- Torque Sensors

- Strain Sensors

- Displacement Sensors

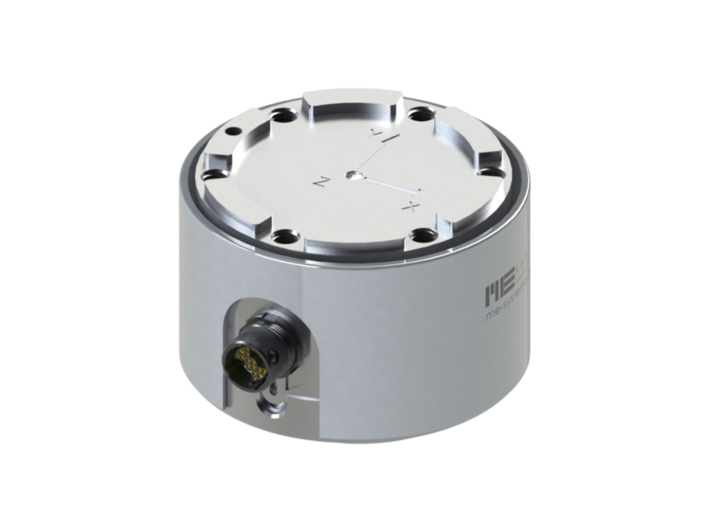

- Acceleration Sensors

- Temperature Sensors

- Sensor-Accessories

- K6D-Accessories

- Clamping Box

- K3R-Accessories

- K3D-Accessories

- KM38-Accessories

- Measuring Case

- PCB Accessories

- KDs Accessories

- KR Accessories

- KMz Accessories

- DA Accessories

- END-OF-LIFE Sensors

- Electronics

- 1-Channel Measuring Amplifier

- Multi-Channel Measuring Amplifier

- Multi-Channel Measuring Amplifier With Interface

- Multi-Channel Measuring Amplifier With Analog Outputs

- Wireless Measuring Amplifier

- Electronics-Accessories

- Strain Gauges

- Foil Strain Gauges

- Semiconductor Gauges

- Strain Gauge Accessories

- Resistors

- Foils and Tapes

- Tool-Box

- Adhesives

- Surface-Cleaning

- Protective Coatings

- Soldering Terminals

- Wires and Stranded Wires

- Tools

- Solder and Flux

- END-OF-LIFE Strain Gauges